Last Updated on January 17, 2025 by Satyendra

As you can imagine, a company’s inability to locate its critical assets is a big problem for security. After all, should an attacker gain access to a company’s sensitive data and disclose it, the consequences could be devastating for the company’s reputation and financial well-being. Despite the risks, a large number of companies still simply don’t know where their data is stored.

According to a report by the Institute of Directors (IoD) and Barclays, as many as 43% of those who took part in the study are not able to identify the location of their critical data, and as many as 59% of respondents outsource data storage. Other studies have confirmed this trend. A 2014 study by the Ponemon Institute revealed that 52% of respondents have a “lack of knowledge regarding data”, while a 2017 report called “The Data Security Money Pit: Expense In Depth Hinders Maturity” claims that 62% of respondents are not able to identify the location of their most “sensitive unstructured data”.

Lee Fisher, head of security business for EMEA at Juniper Networks, stated that “We estimate that 50-60% of businesses don’t know where their data is”. He also went on to say that “there is a misunderstanding of how to use data and they don’t know how to protect appropriately.”

To make matters worse, 43% of businesses are failing to report their most disruptive data breaches. Only 57% of companies have a formal cyber security strategy in place, while only 49% of companies are providing cyber security training to their staff. And despite the financial risks associated with a data breach, only 20% of companies have any form of cyber insurance. As I’m sure you can appreciate, these figures paint a fairly bleak picture of the current cyber security trends.

How does one go about locating critical data?

To locate both structured and unstructured data, you have to scan your databases for relevant data types. You can use discovery tools to consolidate and present your findings via a centralized pane. Once you have located your critical assets, classify the data, identify which data belongs to a protected category and which does not, and specify who is allowed access to each category.
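As a rough illustration of what scanning for relevant data types can look like, here is a minimal Python sketch that walks a folder and counts matches for a few sensitive-looking patterns. The pattern set, the category names and the functions `scan_text` and `scan_tree` are assumptions made for this example; commercial discovery tools use far richer rules and also cover databases, which this sketch does not.

```python
import re
from pathlib import Path

# Hypothetical, deliberately simple patterns; real tools use far richer rules.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "card_number": re.compile(r"\b(?:\d{4}[ -]?){3}\d{4}\b"),
}


def scan_text(text):
    """Return a dict mapping each matching category to its match count."""
    counts = {}
    for name, pattern in PATTERNS.items():
        hits = pattern.findall(text)
        if hits:
            counts[name] = len(hits)
    return counts


def scan_tree(root):
    """Walk root and report sensitive-looking categories for each file."""
    findings = {}
    for path in Path(root).rglob("*"):
        if not path.is_file():
            continue
        try:
            # Read as text and skip undecodable bytes; binary formats
            # (Office documents, PDFs) would need a proper parser.
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # unreadable file, e.g. permission denied
        counts = scan_text(text)
        if counts:
            findings[str(path)] = counts
    return findings
```

The per-file counts returned by `scan_tree` could then feed the classification step, for example by tagging any file with a `card_number` hit as belonging to a protected category.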

Remembering the exact location of your files and folders can be a daunting task. On computers running Microsoft Windows, you can locate the required data using File Explorer. Type the name of the file in the search box; Windows looks for matches and displays the highlighted results in a search results folder. You can also perform an advanced search, which lets you find the data by type, name, location, date, size or property tag.
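The same kind of search by name, size and date can also be scripted. The sketch below is a Python approximation, not a description of how Windows search works internally; the function name `find_files` and its parameters are hypothetical.

```python
from datetime import datetime
from fnmatch import fnmatch
from pathlib import Path


def find_files(root, name_glob="*", min_size=0, modified_after=None):
    """Yield files under root matching a name pattern, size floor and date."""
    for path in Path(root).rglob("*"):
        if not path.is_file():
            continue
        # Compare lower-cased names so the match is case-insensitive.
        if not fnmatch(path.name.lower(), name_glob.lower()):
            continue
        info = path.stat()
        if info.st_size < min_size:
            continue
        if modified_after and datetime.fromtimestamp(info.st_mtime) < modified_after:
            continue
        yield path
```

For example, `find_files(r"D:\Shares", "*.xlsx", min_size=1024, modified_after=datetime(2024, 1, 1))` would list spreadsheets of at least 1 KB changed since the start of 2024; the share path here is only a placeholder.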

Locating data on a NetApp Filer can be done using the ONTAP API and PowerShell scripts by providing the exact “path” of the file you are searching for. After getting the list of files, you can filter out the required data. As soon as you know where your data is stored, it becomes significantly easier to protect your critical assets.

Sometimes it is difficult to locate data without knowing its exact location, so it is wise to always audit your file systems. Both Windows File Server and NetApp Filer should be audited proactively for every change in the data, such as the creation, modification, deletion or moving of files and folders.
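To make the idea of change auditing concrete, here is a minimal Python sketch that compares two snapshots of a folder tree and classifies the differences as created, deleted, modified or moved. It is a polling approach chosen for illustration, with hypothetical function names `snapshot` and `diff_snapshots`; it does not record who made a change or when, which a dedicated auditing solution would capture from the file server itself. A file is treated as moved when identical content disappears from one path and appears at another, which is a heuristic.

```python
import hashlib
from pathlib import Path


def snapshot(root):
    """Map each file's path (relative to root) to a SHA-256 of its content."""
    root = Path(root)
    state = {}
    for path in root.rglob("*"):
        if path.is_file():
            digest = hashlib.sha256(path.read_bytes()).hexdigest()
            state[path.relative_to(root).as_posix()] = digest
    return state


def diff_snapshots(before, after):
    """Classify differences between two snapshots produced by snapshot()."""
    created = set(after) - set(before)
    deleted = set(before) - set(after)
    modified = sorted(p for p in set(before) & set(after) if before[p] != after[p])

    # Index newly appeared paths by content hash.
    by_hash = {}
    for path in sorted(created):
        by_hash.setdefault(after[path], []).append(path)

    # A deleted path whose content reappears elsewhere is reported as a move.
    moved = []
    for path in sorted(deleted):
        candidates = by_hash.get(before[path])
        if candidates:
            moved.append((path, candidates.pop(0)))

    moved_sources = {src for src, _ in moved}
    moved_targets = {dst for _, dst in moved}
    return {
        "created": sorted(created - moved_targets),
        "deleted": sorted(deleted - moved_sources),
        "modified": modified,
        "moved": moved,
    }
```

Running `snapshot` on a schedule and passing consecutive results to `diff_snapshots` gives a basic change log, at the cost of re-reading every file on each pass.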

Tracking changes in the location of files and folders using Lepide File Server Auditor

All changes made to the location of data (files and folders) on both Windows File Server and NetApp Filer (both 7-Mode and Clustered-Mode) can be tracked and monitored using Lepide File Server Auditor (part of Lepide Data Security Platform). The solution alerts you to modifications in the data location in real time, on a threshold basis, or both. Alerts are sent as emails to the relevant people and as updates to the Lepide Mobile App (available for both Android and Apple).

To keep track of file and folder changes, you can generate real-time and historical reports, which can be scheduled for delivery by email or saved to a shared location.

Consider the following scenarios where changes in data location are being audited using the Lepide File Server Auditor:

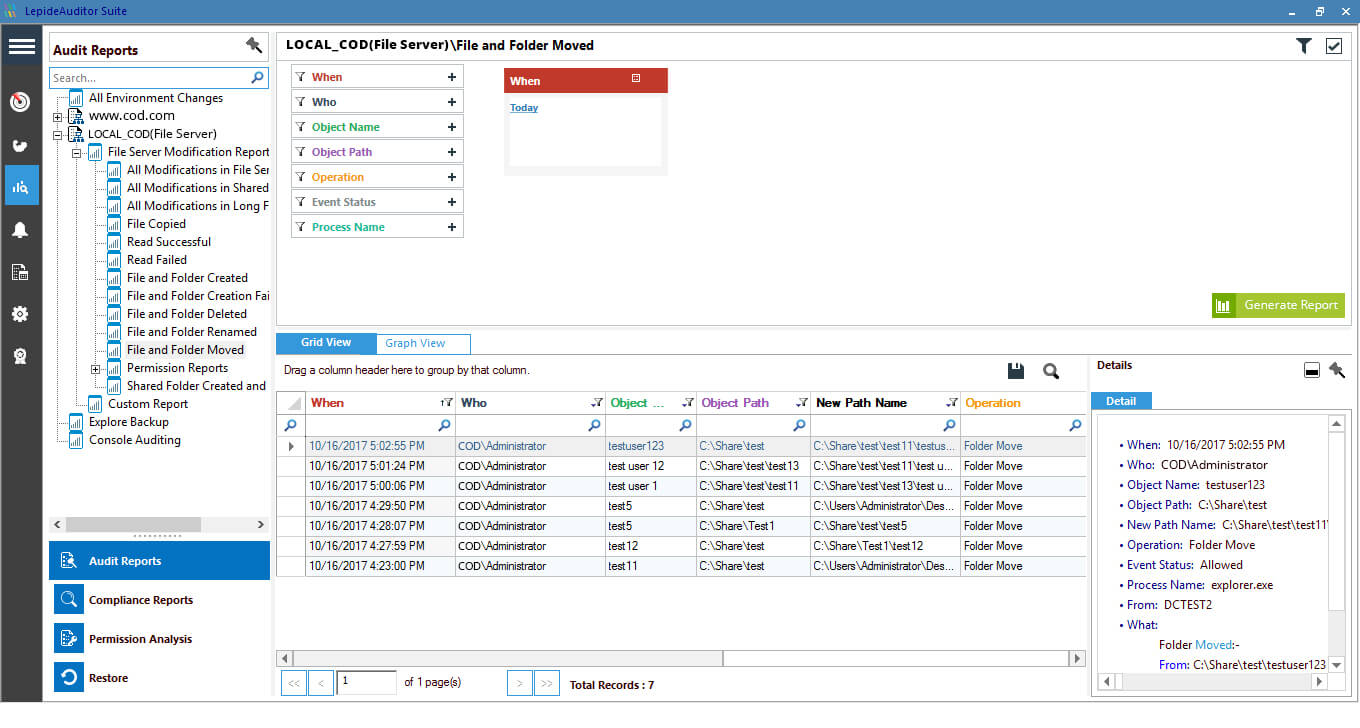

- Both source and target are being monitored: A “File and Folder moved” report is generated whenever any changes are detected in source or target folders, if both are being monitored by our solution.

- Only source is being monitored: A “File and Folder moved” report is generated when changes are spotted at the source location being monitored by our solution.

The following screenshot covers the changes in data location for both of the scenarios listed above. The report displays detailed information in grid view and graph view.

Figure 1: File and Folder moved report

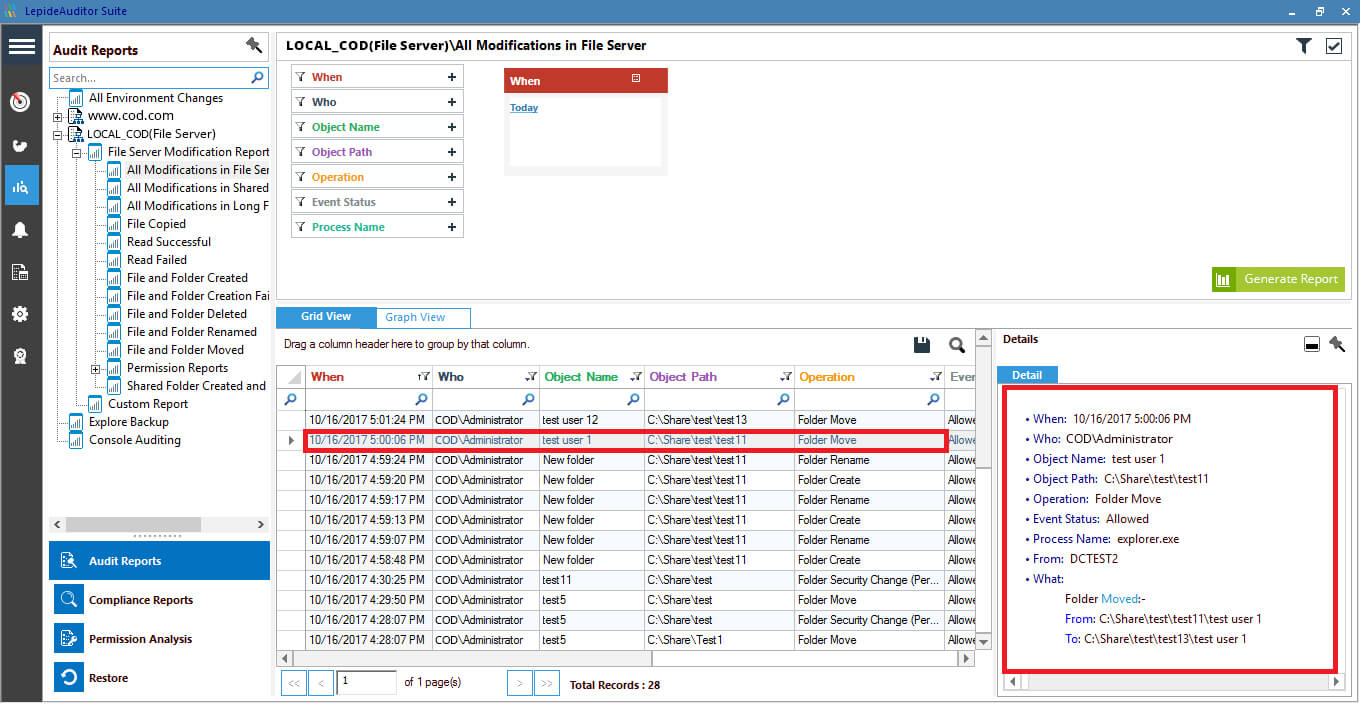

- Only target is being monitored: Lepide’s File Server auditing solution generates an “All modifications” report for file and folder activities. This report gives detailed information about all the changes made by users in the file system. In this third case, where only the target is being monitored, the change is displayed as a “File Moved” operation. Such a change is highlighted in the screenshot (Figure 2).

Figure 2: All modifications in File Server report